You will hear the term “cloud computing” or “in the cloud” and many similar variations and the biggest problem is that a lot of the people using such phrases haven’t a clue what they mean. The first big question is why “cloud”? The answer is that it is usual to represent the internet as a cloud in network and other diagrams and hence “computing in the cloud” basically means internet-based or hosted computing. Why the cloud metaphor was invented and then universally adopted is a mystery but we seem to be stuck with it.

Another big problem is that cloud computing is very easy to confuse with other related but distinct and usually more complicated ideas. For example, cloud computing isn’t the same as parallel distributed computing and it isn’t the same thing as “the grid”. Things tend to get complicated when parallelism enters the picture, so in this sense it’s a good thing that cloud computing isn’t an attempt at building interactive linked parallel systems. However, without the attempt at parallelism the cloud really hasn’t got much going for it technically speaking – it’s just a collection of computers connected by a network, aka the Internet.

To understand some of the cloud based ideas consider how we run applications in a non-cloud based way. You write an application, store it on a local computer, run it locally and use it locally. If you don’t have the hardware to run the application in the room that you want to use it in then there is nothing you can do about it. Now consider your application implemented as a Web application. You now store it on a Web server, which can be situated anywhere in the world, and you can access the web application from any location that has the minimal hardware necessary to access the web, i.e. a browser or custom client. This is really all that cloud computing is about; put simply it is “hosting” on steroids.

Hosting is the way that most small to medium businesses have long chosen to create their web sites. Instead of having to build a data centre they use one that is already set up. There are lots of server hosting companies and the idea is very obvious and not very new. Seen from this perspective cloud computing is a remarketing of Web 2 with some additional customisation to try to get the best out of the architecture.

So by this analysis web applications like GMail and Google Apps are cloud applications and so are any similar web services you might implement. So all this sounds as if there is nothing at all new here and there is a very real sense in which this is true. You could compare it to the invention of the clever name “Ajax” for a group of web technologies that could be used together in a particular way. Nothing new was invented but something was definitely added by the introduction of the term. It gave developers a new target to aim at and a language that could communicate what they were trying to do. So it is with cloud computing – only in the case of Microsoft’s efforts even more so because as well as some new jargon we have some new facilities that make the new target application type even more clearly defined.

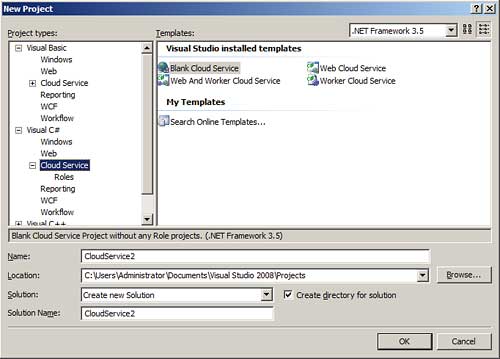

Azure brings new VS templates

Virtual

Before looking at Azure it is worth looking at other cloud computing offerings to see what approaches are the norm. In particular Amazon has a system that could well be seen as the major competitor to Azure. Amazon Elastic Compute Cloud (Amazon EC2) is basically a facility whereby you can run a virtual machine on Amazon-provided hardware. The whole idea of virtual machines is central to some of the ways that cloud computing is implemented and the facilities it provides. In case you’ve missed the idea, a virtual machine is represented as a file on disk that contains all the data, programs and state information of the machine when it was last run. A virtual machine application such as VMware, Virtual PC or Virtual Server can load the virtual machine image and run it along with as many other virtual machines as the physical hardware can handle.

The reasons why a virtual machine is ideal for cloud computing are not difficult to see. You can load a virtual machine onto any hardware you happen to have free; a virtual machine always has a standard hardware configuration emulated by the virtual machine platform; you can transfer a virtual machine image from one location to another by file transfer; and finally you can run multiple copies of the virtual machine to meet the current client demand. Of course the downside is that every virtual machine has a complete copy of the operating system and any applications software it needs to function. This is not a very efficient approach to any problem but it is incredibly easy.

As you need multiple copies of operating systems to make the virtual machine cloud computing idea work, it’s not surprising that open source operating systems and server applications are the natural choice as there are no licensing difficulties. Once you have an image of a Linux-based virtual server you can create as many copies of it as you like simply by file transfer and starting the machine on suitable hardware. Amazon EC2, for example, uses Amazon Machine Image (AMI) format virtual machines running one of a range of Linux options of your choice or the recently introduced Windows 2003. Basically all you have to do to get started with EC2 is create a suitable virtual machine, create and install the application you want to run on it and then upload the machine’s image to the EC2 system. There are even predefined AMIs with standard machine and software configurations ready for you to download, customise and then upload. Of course what you pay for running an instance of your virtual machine for an hour depends on its hardware profile and what commercial software is installed on it. You can guess that using Linux and MySQL on a one-core machine is cheaper than opting for Windows 2003 and SQL Server. Notice also that the up-front cost is very low – perhaps even zero depending on how you account for it. Compare the cost of setting up a data centre with 1000 servers running your software to meet a guessed-at peak demand with the 11 cents per hour (13.5 cents for Windows) that EC2 charges for each server instance. This doesn’t mean that your overall bill will be low, however, because the charges go up according to the hardware specification of the virtual machine, how much data it transfers and what software it uses. Also notice that you get backup and security implemented by the remote data centre.
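The arithmetic behind that comparison is easy to sketch. The two hourly rates below are the ones quoted above; the demand curve and the function name are invented purely for illustration, and data transfer, storage and software charges are ignored, so treat the result as a rough guide rather than a real bill.

```python
# Hourly instance prices quoted in the article (US dollars).
LINUX_RATE = 0.11
WINDOWS_RATE = 0.135


def instance_hours_cost(instances_per_hour, hourly_rate):
    """Cost of running the given number of instances in each hour."""
    return sum(instances_per_hour) * hourly_rate


# Hypothetical day: 50 instances for 20 quiet hours, 1000 for 4 peak hours.
demand = [50] * 20 + [1000] * 4

# Pay only for the instances actually running in each hour.
pay_per_use = instance_hours_cost(demand, LINUX_RATE)

# Keep enough instances for the peak running around the clock.
always_at_peak = instance_hours_cost([max(demand)] * len(demand), LINUX_RATE)

print(f"Following demand: ${pay_per_use:,.2f} per day")
print(f"Provisioned for peak all day: ${always_at_peak:,.2f} per day")
```

With this made-up demand curve the pay-per-use figure is a fraction of the always-at-peak figure, but the gap depends entirely on how spiky your real traffic is.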

Once you understand the role the virtual machine plays in EC2, it all seems very simple. Notice that there is no real restriction on what the AMIs are doing – although most will be web applications they don’t have to be. If you want to create an application that computes the digits of Pi then you can, and you can run as many copies of it for as long as you can afford. Notice that this raises the question of how AMIs can interact, and the answer is that they don’t interact in any way that goes beyond how any standard machine can interact with any other. That is, cloud computing is not parallel or grid computing. If you want the machines that are computing Pi to divide up the task then it’s up to you to work out how to do it and how the machines should communicate. The problems that you have are exactly the same as if you had built the hardware needed for the job – the only difference is that the cloud computing machines are virtual. That is, the scalability of cloud computing solutions is just the same as the server or application’s scalability but you don’t have to buy new hardware to make it happen. One new feature, however, is that by paying for only what you use you can tailor the amount of computing power you throw at the system according to demand and this introduces the idea of dynamic scalability.
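To make the “it’s up to you” point concrete, here is a minimal sketch of one way the Pi task could be divided. It is an assumption for illustration, not anything EC2 provides: it approximates Pi with the Leibniz series rather than generating digits, each “machine” is just a function call, and the question of how the partial results travel back to a coordinator is left out entirely.

```python
def split_range(total_terms, machines):
    """Divide term indices 0..total_terms-1 into contiguous chunks, one per machine."""
    base, extra = divmod(total_terms, machines)
    chunks, start = [], 0
    for i in range(machines):
        # The first `extra` machines take one additional term each.
        size = base + (1 if i < extra else 0)
        chunks.append((start, start + size))
        start += size
    return chunks


def partial_leibniz(start, stop):
    """Work done by one machine: sum the Leibniz terms with index in [start, stop)."""
    return sum((-1) ** k / (2 * k + 1) for k in range(start, stop))


def estimate_pi(total_terms, machines):
    """Coordinator: hand out chunks, then combine the partial sums."""
    partials = [partial_leibniz(a, b) for a, b in split_range(total_terms, machines)]
    return 4 * sum(partials)


print(estimate_pi(1_000_000, machines=8))
```

Everything hard about the real problem, such as starting the instances, passing each one its chunk and collecting the answers, is exactly the part the cloud leaves to you.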

The virtual machine flavour of cloud computing has been used by just about everyone else offering such a service. For example IBM has Blue Cloud using virtual Linux machines and many traditional web hosting companies offer similar services. It seems that anyone with a data centre and excess capacity finds it attractive to offer to host your virtual machines. However, not all cloud computing uses the virtual machine approach in this clear and obvious way. Google, for example, does have a cloud computing system, but only if you are happy to code in Python. It’s not really a virtual machine approach, more a way to allow you to run your programs on their hardware. Interestingly, Azure can be seen in the same light as it doesn’t make anything of the virtual machine idea, preferring instead to describe what is going on as providing a cloud based operating system for a particular flavour of applications.

In addition to simply hosting virtual machines, some more ambitious cloud computing systems also offer “cloud services”. For example, while you can simply use storage within the virtual machine via a database or the operating system’s file services (just as if the machine was physical) you might want to use storage located on the web that is easier to share between multiple instances of the machine. Other such cloud services include queue servers, database servers, payment systems and just about any other webservice that could prove useful.

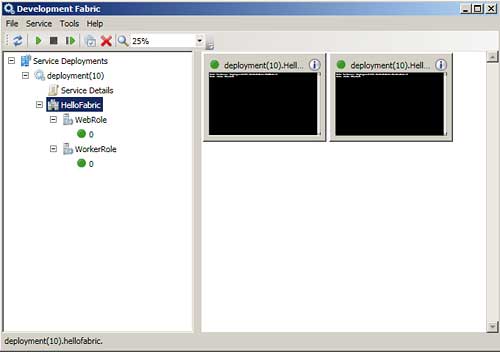

The new role manager lets you see and control running applications

Azure

Azure Services Platform is Microsoft’s offering by way of cloud computing and at the moment it doesn’t seem to be just a simple system for hosting a virtual machine like Amazon’s EC2. The biggest difficulty in finding exactly what we are talking about is the way that Microsoft has piled on top everything that is even vaguely relevant. There are lots of layers offering services such as storage, BizTalk and everything with “Live” in its name and some of these might be relevant to what you eventually attempt to build – but at the start of the process this clutter definitely makes it difficult to see what the underlying architecture and approach are all about.

To find out, the simplest thing to do is focus in on the Azure core itself. You can download an early CTP of the Windows Azure Software Development Kit and the Windows Azure Tools for Microsoft Visual Studio from the Microsoft website. It is important to know that you need both of these to try out Azure. The SDK provides the runtime system, and the tools add on to Visual Studio to allow you to create projects that target the runtime. To make use of them you need to install both on a system that is running Windows 2008 or Vista with Visual Studio and IIS 7. You also need a copy of SQL Server Express 2005 or 2008 – full SQL Server isn’t suitable at the moment.

What we have at the moment is more like a system that allows you to build and run web applications than a completed hosting environment. For example there is no clue as to how you would publish or manage an Azure application on a distant cloud based host. Everything is performed locally via the usual desktop interface. Current information suggests that the cloud version will use Windows 2008 to host the Azure “fabric” and this will provide at least the facilities in the CTP. Multiple instances of the application will be able to run with the help of a load balancer and all responding to the same URL. This sounds a lot like a web server farm. What isn’t clear is how the isolation between the different users of the service is maintained. In virtual machine based systems the distinction between users is provided by the running instances. In the case of Azure the distinction seems to be at the application level – i.e. multiple web applications running under a single operating system and a single web server rather than one virtual machine per application. Again it is important to stress that this could change as the current documentation says that the “development fabric” simulates the “Windows Azure fabric” and this is a very big distinction from it being the actual Windows Azure fabric.
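The “web server farm” picture can be sketched in a few lines. The article does not say how Azure’s load balancer chooses an instance, so the round-robin policy here is an assumption, and the class and instance names are hypothetical.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands each incoming request to the next instance in turn."""

    def __init__(self, instances):
        if not instances:
            raise ValueError("at least one instance is required")
        self._next_instance = cycle(instances)

    def route(self, request_path):
        # Every instance answers for the same URL; the client never sees which one.
        instance = next(self._next_instance)
        return f"{instance} handled {request_path}"


balancer = RoundRobinBalancer(["instance-0", "instance-1", "instance-2"])
for path in ["/", "/orders", "/orders/42", "/"]:
    print(balancer.route(path))
```

Because any instance may receive any request, nothing that matters can live in one instance’s memory between requests, which is one reason shared storage matters so much in this model.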

As with all new systems we have some new jargon. Azure uses “roles” to mean some sort of application that runs under the fabric. Currently we have two supported types of role. The web role is just a web application using either HTTP or HTTPS and a subset of ASP.NET and WCF. A worker role is a more general application that doesn’t support an HTTP/S connection and interacts with some of the other services provided by the fabric – storage and queue. A service can have one web role or one worker role, or one of each.

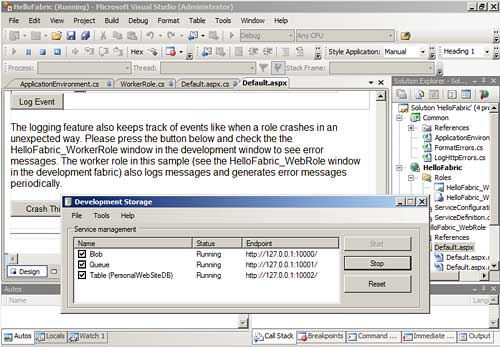

To build a web role you simply select the appropriate template when you start a new project. You can currently work in C# or VB but Ruby, F# and Java support is available. The new project is essentially an ASP.NET project with a few additional XML configuration files in a Roles folder. A default .aspx page is provided with a code-behind file in the language of your choice. From this point on you can work with the page as if you were building an ASP.NET page complete with a set of controls to drag-and-drop onto the page. If you run the application then apart from the availability of two new tools – a Fabric UI utility and a Storage UI utility – there is not much to notice that is different. Of course as the idea is to make remote and local execution identical this isn’t necessarily a criticism!

The documented “fabric” API is very small, consisting of ILocal, an interface that controls local non-persistent storage, and three classes, RoleEntryPoint, RoleException and RoleManager, all focused on managing a role – start, stop, initialise, status etc. It isn’t currently very clear what the accepted way for roles to communicate will be, but the documentation states that all roles can make outbound HTTP or HTTPS connections and make use of low level TCP/IP sockets if required. However, only web roles can act as listeners. It seems that beyond this communication is to be via shared storage and the few common fabric API calls that are available.

The documentation mentions the fact that the ASP.NET application has some restrictions placed on it without going into detail as to what these are. It seems fairly reasonable to suppose that the restrictions relate to classes and controls that provide facilities supported by the Windows API such as File Systems, Security, Registry, etc as these are likely to belong to the host machine. However not having access to local storage is going to be a problem, hence the introduction of Azure Storage Service, which is the single undeniably new feature of the Azure environment. The first shock is that this is implemented as a REST web service rather than using SOAP. The second shock is that no standard client libraries are provided unless you count the code provided as part of the samples.

REST is a simpler and more direct approach to implementing a web service and is supported by WCF and so is probably a good idea, unless you have invested heavily in mastering SOAP. The storage sample actually comments the classes provided to work with the REST interface as an “API” and this does indeed need to be elevated from a sample to a supported API. Currently all storage is provided by SQL Express, which is another thing which has to change in the future. Development storage allows only a single fixed account and doesn’t scale in the same way that a production system is promised to.

Three storage services are provided – Blob, Queue and Table. As you can probably guess, Blob storage allows a role to store almost anything and regards it as a Binary Large OBject. You define a container complete with metadata and can then store multiple Blobs within it. Stored Blobs extend the REST URI naming and this means that to get at a Blob you need to know and use its URI as if it was a “path” name. Thus Blob storage can be used to mimic a traditional file system. The only complication from the programming point of view is that Blobs are limited to read/write in units of 64MBytes. You can create Blobs bigger than this but only by working in 64MByte blocks. The current maximum Blob is 50GBytes.
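The block restriction is easiest to see as arithmetic. The sketch below only works out which byte ranges a large Blob would have to be transferred in; it makes no calls to the storage service, the function name is hypothetical, and it assumes that a MByte means 2^20 bytes.

```python
BLOCK_LIMIT = 64 * 1024 * 1024   # 64 MB per read/write, as stated above
MAX_BLOB_SIZE = 50 * 1024 ** 3   # 50 GB maximum Blob size, as stated above


def block_ranges(blob_size, block_limit=BLOCK_LIMIT):
    """Return (offset, length) pairs covering a Blob of blob_size bytes."""
    if blob_size > MAX_BLOB_SIZE:
        raise ValueError("Blob exceeds the 50 GB limit")
    return [
        (offset, min(block_limit, blob_size - offset))
        for offset in range(0, blob_size, block_limit)
    ]


# A 150 MB Blob needs three transfers: 64 MB, 64 MB and 22 MB.
for offset, length in block_ranges(150 * 1024 * 1024):
    print(offset, length)
```

A real client would issue one request per range against the Blob’s URI, but the details of those requests belong to the REST interface and are not shown here.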

The Queue provides a persistent messaging system which can be used for interprocess communication. You can create queues and add and remove messages from them in a producer consumer type model. Currently messages have to be smaller than 8KBytes.
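The producer-consumer pattern looks like this in outline. This is an in-memory stand-in written for illustration, not the Azure Queue API: it is not persistent, the class and message names are hypothetical, and the only thing taken from the text above is the rule that a message must be smaller than 8KBytes.

```python
from collections import deque

MAX_MESSAGE_BYTES = 8 * 1024  # "smaller than 8KBytes", per the text above


class SketchQueue:
    """In-memory stand-in for a message queue; not the Azure Queue API."""

    def __init__(self):
        self._messages = deque()

    def put(self, body: bytes):
        if len(body) >= MAX_MESSAGE_BYTES:
            raise ValueError("message must be smaller than 8 KB")
        self._messages.append(body)

    def get(self):
        """Remove and return the oldest message, or None if the queue is empty."""
        return self._messages.popleft() if self._messages else None


queue = SketchQueue()

# Producer (for example a web role) posts work items.
for order_id in (1, 2, 3):
    queue.put(f"resize-image:{order_id}".encode())

# Consumer (for example a worker role) drains them in order.
while (message := queue.get()) is not None:
    print("processing", message.decode())
```

The small message limit suggests a design in which the message carries only a reference, such as the URI of a Blob, rather than the data itself.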

Table storage, as its name suggests, allows you to store tabular data complete with rows and keys. However, this isn’t a full relational database as provided by SQL Server. You can work directly with the REST API, use the sample class wrappers or work with ADO.NET Data Services (and hence LINQ).

The storage manager is the other obvious new feature

The future is…?

Overall you can’t help but be confused by the range of services that Azure offers and sets up as alternatives to already existing technologies – specifically SQL data services. There are advantages to the Azure storage system: it’s simple and Microsoft claims that it will be efficient and scalable. However, Azure storage is new, limited and seems to be reinventing the wheel, albeit with a slightly different shape, yet again.

Turning to the bigger picture, it is difficult to think of what you could do with an Azure application that you could not already do with ASP.NET, web services and so on. This isn’t perhaps unreasonable as Azure isn’t a revolution in application type, more in application hosting. However, even here there isn’t a clear-cut distinction between “cloud hosting” and traditional web server hosting. If you want to host an ASP.NET application on the “cloud” why not use a traditional hosting service complete with “cloud storage” in the form of SQL Server or perhaps SQL data services or an alternative?

What Azure does provide is a “pattern” for creating an application that will run in a hosted environment. This almost comes down to the fact that now there is a Visual Studio template for the project type and so developers will consider using it rather than, say, an ASP.NET application with hosting specifications to be sorted out at a later date. With an Azure application you know how it is going to be hosted and you don’t even need to shop around. This brings us to cost. Microsoft plans to charge on a per-use basis, and what the actual cost turns out to be will either sink Azure as a platform or make it so attractive it can’t be ignored. Only time will tell.

Harry Fairhead is a consultant who advises on networking and computer hardware platforms, which now encompass virtual computing and computing in the cloud.
